AI Change Management: A Practitioner’s Framework for Successful AI Adoption

AI change management is the structured approach to leading people, processes, and culture through the adoption of artificial intelligence. It goes beyond traditional change management because AI introduces deeper fears (identity, not just workflow), faster technology cycles, and higher uncertainty about outcomes.

Over the last few years, we’ve worked on dozens of AI projects across mid-market companies, and the same pattern keeps repeating: projects don’t fail because of bad algorithms or wrong tools. They fail because of people, process, and culture.

Back in 1982, Blumberg and Pringle proposed a formula that still holds:

Performance = Ability × Motivation × Opportunity

In the context of AI implementation, Ability is your measure of the AI’s effectiveness and impact. Opportunity is the time and resources freed up by AI systems. Motivation is where it all comes together, or collapses. With zero motivation, the entire formula goes to zero, no matter how powerful your AI or how much opportunity it creates.
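To make the multiplicative point concrete, here is a minimal sketch. The 0-to-1 scoring scale, the function name `performance`, and the example scores are illustrative assumptions, not part of Blumberg and Pringle's original work.

```python
def performance(ability: float, motivation: float, opportunity: float) -> float:
    """Multiply the three factors; each is assumed to be scored from 0.0 to 1.0."""
    factors = {"ability": ability, "motivation": motivation, "opportunity": opportunity}
    for name, value in factors.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0.0 and 1.0, got {value}")
    return ability * motivation * opportunity


# A highly capable AI system rolled out to a team with no motivation to use it.
strong_ai_no_buy_in = performance(ability=0.9, motivation=0.0, opportunity=0.8)

# A more modest system adopted by an engaged team.
modest_ai_engaged = performance(ability=0.6, motivation=0.8, opportunity=0.6)

print(strong_ai_no_buy_in, modest_ai_engaged)
```

Because the factors multiply rather than add, the modest system with an engaged team scores higher than the stronger system nobody wants to use.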

The numbers back this up. Research from Cutter Consortium puts AI project failure rates at over 80%, twice the rate of non-AI IT projects. Only 48% of projects succeed in moving from prototype to production. Informatica found that 43% of failures tie directly to data quality and readiness. Change management is consistently called “critical but often overlooked” across these studies.

This article distills 27 years of implementation experience, and specifically the last several years of AI implementation, into a 10-area framework that covers the full scope of what organizations need to get right.

What Makes AI Change Management Different from Traditional Change Management

Traditional change management for ERP rollouts, process changes, or org restructures is well understood. Kotter’s 8-step model, ADKAR, and other frameworks have been refined over decades. AI change management builds on those foundations but adds three specific dimensions.

The fear dimension is deeper. AI threatens identity, not just workflow. When you roll out a new ERP system, people worry about learning new software. When you roll out AI, people worry about whether they’ll still be relevant. That’s an existential concern, not a training concern. It requires a fundamentally different approach to communication and support.

The pace of change is faster. AI capabilities evolve month to month. By the time you’ve built consensus for one approach, the technology landscape has shifted. Traditional change management assumes a stable target state. AI change management requires adaptive planning that can absorb continuous evolution.

The uncertainty is higher. Even the implementers don’t always know exactly how the AI will behave until it does. Traditional software is deterministic: same input, same output. AI systems have a probabilistic quality that requires a different kind of organizational trust and a stronger feedback loop.

Research supports this: studies from multiple academic sources (lsst.ac, ScienceDirect, mbs.edu, Frontiers) show that people who understand AI are less fearful of job displacement than those who don’t. Education doesn’t just teach skills; it recalibrates the emotional response to AI. This finding runs through the entire framework below.

Frameworks like ADKAR (Awareness, Desire, Knowledge, Ability, Reinforcement) give you the what of change management. The 10-area framework that follows gives you the how for AI specifically.

The 10-Area AI Change Management Framework

We call this the 10-Area AI Change Management Framework because naming it matters. Named frameworks get remembered, referenced, and applied. Lists of tips don’t.

Area 1: Vision — Replace Mandates with Meaning

Every AI initiative needs a clear, compelling reason that connects to what people actually care about in their daily work.

Good vision statements sound like this:

- “Your Time, Your Choice” — AI handles the repetitive stuff you don’t enjoy doing anyway, so you can spend your time on the work that actually uses your brain and skills.

- “Stop Reinventing the Wheel” — AI remembers the solutions we’ve already figured out, so you’re not starting from scratch every time or hunting down who knows what.

- “Do Your Best Work, Faster” — AI takes care of the grunt work in your process, so you can focus on the creative and strategic parts where you actually add value, and get it done in less time.

The most common mistake here is defaulting to mandates instead of vision. (See the dedicated section below on why mandates almost always backfire.)

Write your vision statement in terms your team would use to describe the change to a friend. If it sounds like a memo from leadership, rewrite it. Once you have buy-in on vision, the next challenge is knowing which AI projects to tackle first.

Area 2: Urgency — Don’t Assume It’s Self-Evident

Urgency is the force that overcomes natural inertia and resistance to change. AI is everywhere in the news, so it’s tempting to assume urgency is built in. It isn’t. People are saturated with AI headlines, and saturation breeds numbness, not urgency. Real urgency connects to a specific business situation: a competitor who just deployed AI-powered customer service, a key employee retiring next quarter and taking decades of knowledge with them, a process that costs 40 hours per week that could cost 4.

The mistake most organizations make is assuming that because AI is a hot topic, their team already feels the urgency to change. They don’t. They feel overwhelmed, which is the opposite of urgently motivated.

Tie urgency to a concrete, time-bound reality your team can see and verify. “Our industry is changing” doesn’t create urgency. “Our largest competitor deployed AI-assisted quoting last quarter and cut their response time from 48 hours to 4” does.

Area 3: Leadership Commitment — Walk the Walk

Leaders need to actively model the behavior and learning they’re asking of their team. Leaders who are truly committed to AI adoption use AI themselves. They can speak to what it does well and where it falls short from firsthand experience. They show up to training sessions. They share what they’ve learned and what they’ve struggled with.

The view of leadership we take from Peter Drucker’s The Effective Executive is that leadership isn’t only about making decisions. It’s about being in service to your team: listening to their challenges, removing their roadblocks, and ensuring they have what they need to do their best work.

Where this falls apart: declaring AI a priority while never personally engaging with the tools or the learning process. Teams notice immediately.

Spend time with the AI tools yourself before rolling them out. Be able to speak credibly about what works and what doesn’t. Share your own learning curve openly.

Area 4: Listening — Bottom-Up Obstacle Discovery

Before prescribing solutions, engage with the people doing the work to understand what’s actually taking their time and where the real obstacles are.

One organization we worked with discovered through listening that their team’s biggest time drain wasn’t something AI needed to fix at all. People were getting interrupted throughout the day with ad-hoc questions. Simply batching all questions into a single daily list reduced individual workload by 4 hours per week. AI never entered the equation. First principles thinking, powered by listening, identified the real problem.

In Kotter’s 8-step methodology, listening is the tool for discovering where obstacles actually are, not where leadership assumes they are. If you’re not listening, you’ll never discover where the real obstacles lie.

The most common failure here is prescribing AI solutions before understanding the actual problems. AI is a tool. Focus on the problems first, then assess whether AI is the right fix.

Before any AI implementation, conduct structured conversations with the people doing the work. Ask: what takes the most time? What’s the most frustrating part of your day? What would you change if you could? The answers often surprise leadership.

Area 5: Coalition Building — Influencers, Experts, and Power Holders

Change sticks when it’s guided by a cross-functional group with credibility, knowledge, and authority. Effective coalitions include three roles: key influencers (people others trust and listen to), subject matter experts (people who understand the work deeply), and power holders (people who can allocate resources and remove obstacles). These aren’t always senior leaders. Sometimes your most important influencer is the person on the team everyone turns to with questions.

A common mistake is building the coalition only from enthusiasts. The most resistant person in your organization may be the most valuable coalition member once their concerns are addressed. Skeptics who convert become your strongest advocates because they’ve pressure-tested the approach.

Identify your key skeptic early and invest time understanding their resistance. Their concerns represent what many others are thinking but not saying. Address their objections and you address the silent majority’s.

Area 6: Alignment — AI Solves Their Problems, Not Just Yours

The value AI delivers needs to be framed in terms that matter to the people using it, not just to the balance sheet.

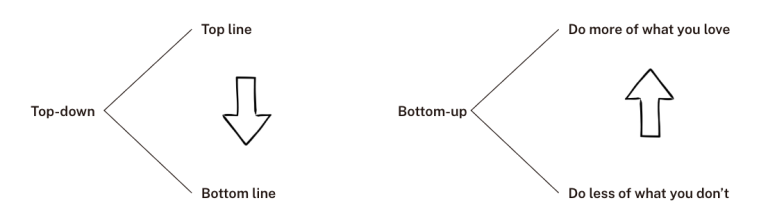

Companies think about AI value at the top of the spectrum:

- How can AI increase revenue?

- How can AI decrease costs?

People in the organization think about AI value differently:

- How can AI help me do more of the things I want to be doing?

- How can AI help me do less of the things I don’t want to be doing?

Both frames are valid. The mistake is communicating only in the first frame and expecting adoption in the second.

Where organizations get stuck is framing AI benefits in terms of company productivity targets and expecting individual buy-in. “Increase efficiency by 30%” doesn’t resonate with the person who just wants to stop copying data between spreadsheets for three hours every Friday.

For every AI initiative, write two benefit statements: one for the business case and one for the daily-work case. Lead with the daily-work case when communicating with the people who’ll use the system.

Area 7: AI Education — The Single Most Effective Way to Reduce Resistance

Structured learning helps people understand what AI is, how it works, what it can do, and critically, what it can’t do. This matters because there are at least ten common resistance types to AI adoption:

- Fear of losing their job

- Professional insecurity

- Threat to sense of identity and self-worth

- Loss of control and autonomy

- Privacy and surveillance concerns

- Quality and reliability fears

- Ethical and moral concerns

- Overwhelm and cognitive load

- Workflow disruption anxiety

- Trust and transparency issues

That’s a lot to address. Education tackles the majority of them at once. Studies show that people who understand AI, even at a functional (non-technical) level, are significantly less worried about job security, inadequacy, overwhelm, and disruption. They start to see AI’s potential to help them, rather than experiencing it as an undefined threat.

Knowing AI’s limitations is especially empowering. When people understand what AI can’t do, they’re less likely to apply it where it will fail, more confident about where to apply it effectively, and more secure in their own value, because they can clearly see what AI still needs humans for.

Too many organizations train people on the tool without training them on the mindset. Teaching someone which buttons to press in an AI interface doesn’t address the identity-level concerns driving their resistance.

Start AI education with “what AI is and what it can’t do” before “how to use this specific tool.” The former addresses the emotional resistance; the latter addresses the skill gap. Both matter, but order matters.

Area 8: Safe Experimentation — Structured Sandboxes, Not Free-for-Alls

People need a governed space to explore, ask questions, and build experience with AI at their own pace. In one organization, a single person experimented with installing local AI models, training them on internal data, and then sharing what was possible with co-workers. That sharing generated confidence, new skills, and new ideas across the team. Experimentation, when structured, becomes a powerful form of peer education.

The risk is letting experiments run without governance. Ungoverned experiments create three risks: tools get adopted before being properly evaluated, AI systems get deployed without clear ROI, and experiments become de facto policy without proper assessment. Six months later you’re migrating off something nobody officially approved.

Set clear boundaries for experimentation: what data can be used, which platforms are approved, and how insights get shared and assessed before becoming policy. Structure the sandbox; don’t just open the doors.
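The three boundaries above (data, platforms, and how insights get shared) can be written down as a simple policy check. This is a minimal sketch: the class names, field names, and example values are hypothetical, and a real policy would likely live in whatever governance tooling you already use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SandboxPolicy:
    approved_platforms: frozenset[str]
    allowed_data_classes: frozenset[str]


@dataclass(frozen=True)
class Experiment:
    owner: str
    platform: str
    data_class: str
    sharing_plan: str  # how findings get shared and assessed before becoming policy


def review(experiment: Experiment, policy: SandboxPolicy) -> list[str]:
    """Return the policy problems found; an empty list means the experiment fits the sandbox."""
    problems = []
    if experiment.platform not in policy.approved_platforms:
        problems.append(f"platform '{experiment.platform}' is not approved")
    if experiment.data_class not in policy.allowed_data_classes:
        problems.append(f"data class '{experiment.data_class}' is not allowed")
    if not experiment.sharing_plan.strip():
        problems.append("no plan for sharing and assessing results")
    return problems


# Example values are made up for illustration.
policy = SandboxPolicy(
    approved_platforms=frozenset({"local-model", "approved-cloud-assistant"}),
    allowed_data_classes=frozenset({"public", "internal-non-sensitive"}),
)
governed = Experiment("alex", "local-model", "internal-non-sensitive", "demo at the monthly team review")
ungoverned = Experiment("sam", "free-browser-plugin", "customer-records", "")

governed_problems = review(governed, policy)
ungoverned_problems = review(ungoverned, policy)
```

The point of the sketch is that an experiment either fits the agreed boundaries or produces a concrete list of what needs to be resolved first.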

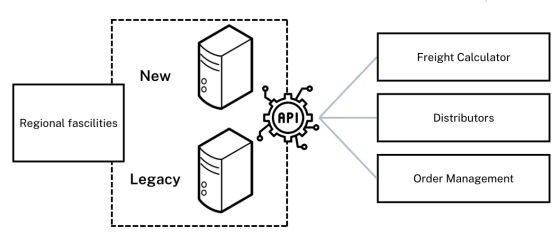

Area 9: Integrate, Don’t Disrupt — Parallel Implementation Over Big-Bang Rollouts

AI rollouts work best when the new system connects alongside existing workflows, not replacing them overnight.

Parallel implementation lets people use the new AI system optionally while the existing system continues to operate. This reduces risk, gives people time to build confidence, and provides real comparison data between old and new approaches. If the AI system fails or underperforms, you haven’t burned your bridge back.

A microservices approach to AI implementation also helps: release new features as contained components with clearly defined inputs and outputs, so they can be added and removed independently. Each component can be swapped, improved, or rolled back without affecting the rest of the system.

Treating AI deployment like a light switch (off to on) instead of a dimmer (gradual increase) is especially dangerous in environments where downtime is expensive.

Run parallel systems during initial rollout. Set a clear evaluation period (30, 60, 90 days) with defined success criteria before sunsetting the old system.
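One way to collect comparison data during the evaluation period is a shadow-mode variant of parallel implementation: the existing system stays authoritative while the AI system runs alongside it and its results are only recorded. The sketch below assumes both systems can be called as simple functions; `ParallelRun`, `legacy_quote`, and `ai_quote` are hypothetical names used for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ParallelRun:
    legacy: Callable[[str], str]     # existing system, remains the source of truth
    candidate: Callable[[str], str]  # new AI system under evaluation
    matches: int = 0
    mismatches: int = 0
    candidate_errors: int = 0

    def handle(self, request: str) -> str:
        legacy_result = self.legacy(request)
        try:
            candidate_result = self.candidate(request)
        except Exception:
            # A failing candidate never affects the answer people receive.
            self.candidate_errors += 1
        else:
            if candidate_result == legacy_result:
                self.matches += 1
            else:
                self.mismatches += 1
        return legacy_result

    def agreement_rate(self) -> float:
        total = self.matches + self.mismatches + self.candidate_errors
        return self.matches / total if total else 0.0


def legacy_quote(request: str) -> str:
    return request.upper()


def ai_quote(request: str) -> str:
    if request == "rush order":
        raise RuntimeError("model unavailable")
    return request.upper()


run = ParallelRun(legacy=legacy_quote, candidate=ai_quote)
results = [run.handle(r) for r in ["standard order", "rush order", "repeat order"]]
rate = run.agreement_rate()
```

The agreement rate, together with the error count, gives you one input for the success criteria you defined up front. It doesn't replace asking people how the new system felt to use.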

Area 10: Feedback Loops — Human and AI Self-Reflection

Feedback loops are continuous mechanisms, for both people and AI systems, that surface what’s working, what isn’t, and what needs to change.

Human feedback involves structured check-ins, UX research, and open channels for people to share their experience with the AI system. What do they like? What do they wish it did? Will they actually use it?

AI feedback involves tying the system to performance metrics and enabling self-reflection. If the system isn’t hitting targets, give the AI the task of analyzing why and proposing improvements. This iterative learning capability is new territory. It goes beyond traditional software maintenance into genuine self-improvement cycles.

Treating change management as a pre-launch checklist instead of an ongoing loop is where adoption stalls. Companies that do this see adoption plateau within six months as initial enthusiasm fades and unresolved friction points accumulate.

Schedule formal feedback reviews at 30, 60, and 90 days post-launch, then quarterly thereafter. Include both system performance metrics and human experience data. Make people a part of the solution by acting on their feedback visibly.
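If it helps to get the reviews onto a calendar, the cadence can be generated from the launch date. This is a minimal sketch: the function name is hypothetical, and "quarterly" is approximated as every 91 days after the 90-day review.

```python
from datetime import date, timedelta


def review_schedule(launch: date, quarterly_reviews: int = 4) -> list[date]:
    """Return review dates at 30, 60, and 90 days after launch, then roughly quarterly."""
    early = [launch + timedelta(days=d) for d in (30, 60, 90)]
    # Assumption: a quarter is approximated as 91 days after the 90-day review.
    later = [early[-1] + timedelta(days=91 * i) for i in range(1, quarterly_reviews + 1)]
    return early + later


schedule = review_schedule(date(2025, 1, 6), quarterly_reviews=2)
for review_date in schedule:
    print(review_date.isoformat())
```

Each date should trigger both halves of the review: the system performance metrics and the human experience data.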

The Mandate Trap: Why AI Mandates Almost Always Backfire

This topic deserves its own section because it’s the single most common mistake we see in AI change management, and the most avoidable.

Mandates sound like this:

- “Everyone must use ChatGPT for at least 3 tasks per week”

- “All customer service responses must be drafted by AI first”

- “20% of all tasks must be done by AI before the end of the year”

- “Use AI to write your reports going forward”

- “We’re replacing our documentation system with an AI chatbot”

These mandates don’t communicate a purpose or reason behind the change. And when you don’t create meaning for change, people instinctively assume the worst possible motivations: to replace me, to increase profits at my expense, to diminish my value.

The psychology is straightforward. Mandates signal “we don’t trust you to make good decisions about your own work.” That triggers defensive resistance, the exact opposite of what you need for successful adoption.

When mandates are appropriate: Narrow compliance or safety-critical situations where the process must be followed regardless of preference. “All patient data interactions must go through the encrypted AI system” is a mandate that makes sense because it’s about safety, not productivity.

For everything else: Reframe the mandate as a vision. Same desired outcome, different packaging.

- Mandate: “Everyone must use ChatGPT for 3 tasks per week”

- Vision: “We’re freeing up time for the work that actually uses your skills. AI handles the repetitive parts so you can focus on what you’re good at.”

If a mandate was passed down to you from above, the first step is to take that mandate and shift it from a checkbox into a vision for change. Make it inspiring and rewarding for your team.

AI Change Management for Manufacturing and Industrial Companies

Manufacturing environments add specific dimensions to AI change management that generic frameworks don’t cover.

The knowledge risk is higher. Retiring workers take decades of tribal knowledge with them. In manufacturing, AI change management isn’t just about getting people to adopt new tools. It’s about capturing and preserving institutional knowledge before it walks out the door. The urgency here is real and time-bound in a way that office environments rarely experience.

Workforce demographics require different approaches. Manufacturing workforces often skew older and more hands-on than office knowledge workers. The “your time, your choice” framing still works, but it needs calibration. Floor workers respond to concrete demonstrations more than abstract vision statements. Show them the AI reading a specification sheet and flagging an error that would have caused a rework. That lands differently than a slide deck about “digital transformation.”

Floor vs. office requires separate strategies. AI change on the shop floor and AI change in the back office are fundamentally different projects. Operators and managers have completely different resistance profiles, different daily workflows, and different definitions of “value.” A single change management approach across both groups will underserve one or both.

Integration with existing systems is critical. Manufacturing environments run on ERP, MES, and legacy machinery data. The “integrate, don’t disrupt” principle from Area 9 is especially important here, where downtime is measured in thousands of dollars per hour. Parallel implementation isn’t just good practice in manufacturing. It’s a financial necessity.

For companies in the Pacific Northwest manufacturing sector, these challenges are particularly acute as the region faces significant workforce transitions. Our industrial AI integration services are built around these realities.

Common AI Change Management Mistakes (And How to Avoid Them)

Across the implementations we’ve been involved in, these are the patterns that consistently derail AI adoption:

1. Launching without a vision. Jumping straight to tool selection and deployment without communicating why. People fill the silence with their worst fears.

2. Assuming urgency is self-evident. “AI is everywhere” doesn’t create organizational urgency. Specific, time-bound business realities do.

3. Letting early adopters run ungoverned experiments that become de facto policy. Enthusiasm is great. Enthusiasm without governance creates technical debt and trust issues.

4. Training the tool without training the mindset. A two-hour workshop on “how to use ChatGPT” doesn’t address the identity-level concerns driving most of the resistance.

5. Forgetting that zero Motivation means zero Performance. Refer back to the Blumberg and Pringle formula: anything multiplied by zero is zero. You can build the most capable AI system in the world. If people aren’t motivated to use it, performance stays at zero.

6. Treating change management as a one-time event. It’s an ongoing loop. Adoption plateaus and degrades without continuous feedback and adjustment.

7. Rolling out to the whole organization before feedback loops are in place. Small group, feedback, adjust, expand. That sequence matters.

How to Get Started: Assessing Your AI Change Readiness

Before diving into implementation, ask yourself and your team these questions:

- Can you articulate why AI, and why now, in terms your team would use? If the answer is only in business metrics (“reduce costs by 20%”), you have a vision gap.

- Do you know what your team’s biggest daily frustrations are? Not what you think they are, but what they’ve actually told you. If you haven’t asked recently, start there.

- Who are your informal influencers? Not the org chart leaders, but the people others actually listen to. These are your coalition starting points.

- What’s your team’s current understanding of AI? If most people couldn’t explain the difference between an AI chatbot and a machine learning model, education needs to come before implementation.

- Do you have a feedback mechanism in place? If there’s no structured way for people to share what’s working and what isn’t, build that before you build the AI system.
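The five questions above can be turned into a quick self-check that points to the framework areas to work on first. This is a minimal sketch: the key names and the yes/no scoring are our illustrative assumptions, not a formal assessment instrument.

```python
# Hypothetical mapping from each readiness question to the framework area it points at.
READINESS_CHECKS = {
    "can_state_why_ai_why_now": "Area 1: Vision",
    "knows_team_frustrations": "Area 4: Listening",
    "knows_informal_influencers": "Area 5: Coalition Building",
    "team_understands_ai_basics": "Area 7: AI Education",
    "has_feedback_mechanism": "Area 10: Feedback Loops",
}


def readiness_gaps(answers: dict[str, bool]) -> list[str]:
    """Return the areas to work on first; unanswered questions count as gaps."""
    return [area for check, area in READINESS_CHECKS.items() if not answers.get(check, False)]


# Example answers are made up for illustration.
example_answers = {
    "can_state_why_ai_why_now": True,
    "knows_team_frustrations": False,
    "knows_informal_influencers": True,
    "team_understands_ai_basics": False,
}
gaps = readiness_gaps(example_answers)
print(gaps)
```

Treating an unanswered question as a gap is deliberate: if nobody can answer it, that area needs attention before implementation starts.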

Before managing the change, you need to understand where your organization stands. Our AI Readiness Framework walks through a structured assessment process.

For organizations that want a facilitated version of this exercise, with a team of people in the room working through these questions together, that’s what our AI Whiteboarding session delivers. It’s 90-120 minutes of structured strategy work, not a sales pitch.

Bringing It Full Circle

AI change management matters because performance is multiplicative, not additive. Blumberg and Pringle got it right in 1982, and the formula applies directly to AI implementation today:

Performance = Ability × Motivation × Opportunity

Your AI system’s Ability can be extraordinary. The Opportunity it creates can be substantial. But Motivation is the multiplier that makes everything else matter, or makes everything else irrelevant.

These 10 areas aren’t a pre-launch checklist. They’re the ongoing practice of building and sustaining the motivation that turns AI investment into AI adoption. The companies that succeed with AI will be the ones that invest in both the technological and human elements of the equation.

Explore our AI solutions to see how we help organizations navigate AI implementation from strategy through adoption.

Frequently Asked Questions: AI Change Management

What is AI change management?

AI change management is the structured approach to leading people, processes, and culture through AI adoption. It builds on traditional change management (Kotter, ADKAR) but addresses three additional dimensions: identity-level fear that goes beyond workflow disruption, faster-evolving technology requiring adaptive planning, and higher outcome uncertainty requiring stronger feedback loops. The goal is ensuring AI delivers value by maintaining the human motivation that makes adoption possible.

Why do AI implementations fail?

AI implementation failure rates exceed 80%, twice that of non-AI IT projects, according to research from Cutter Consortium. Common factors include data quality issues (43% of failures per Informatica) and the gap between prototype and production (only 48% of projects make that transition). Change management is consistently identified as the overlooked factor. Using Blumberg and Pringle’s formula: if Motivation equals zero, Performance equals zero regardless of the technology’s capability.

What is the difference between traditional change management and AI change management?

Traditional change management addresses workflow disruption: learning a new system, following a new process. AI change management adds three layers: (1) identity-level fear where people worry about relevance and replacement, not just retraining; (2) a technology cycle that evolves monthly, requiring adaptive rather than fixed planning; and (3) probabilistic system behavior that demands stronger feedback loops and a different kind of organizational trust.

How do you get employee buy-in for AI adoption?

Start with vision, not mandates. Listen first to understand what’s actually consuming people’s time. Frame AI as the solution to their daily frustrations, not the company’s productivity targets. Invest in education early, as research consistently shows that AI-literate employees are significantly less fearful of job displacement. Build a coalition that includes skeptics, not just enthusiasts.

How long does AI change management take?

Initial adoption for a focused implementation typically takes 3-6 months. Feedback loops and culture adaptation continue well beyond launch. Companies that treat change management as a pre-launch checklist see adoption plateau within 6 months as unresolved friction points accumulate. The 10-Area framework is designed as an ongoing practice, not a one-time project.

What role should leadership play in AI change management?

Leadership must model the behavior. Use the AI tools yourself before asking others to. Be able to speak credibly about what works and what falls short. Drucker’s leadership-as-service framing applies directly: your job is to surface resistance, remove obstacles, and ensure people have what they need. Communicating purpose (vision) rather than mandating compliance is the single highest-leverage leadership action in AI change management.

Sources: Blumberg & Pringle (1982) “The Missing Opportunity in Organizational Research”; Kotter’s 8-Step Change Model (kotterinc.com); Cutter Consortium research on AI project failure rates; Informatica data quality study; AI education and fear reduction research: lsst.ac, sciencedirect.com, mbs.edu, frontiers.org; Drucker, “The Effective Executive”; Harvard Business School “Building Change Fitness” (2025).