How to Prioritize AI Projects in 2026: A 5-Criteria Scoring Framework

You have a dozen AI ideas and a budget for two. The customer service chatbot sounds safe. The predictive maintenance system sounds transformative. Your VP of Engineering wants to start with data infrastructure. Your CEO wants something visible by Q3.

This is where most companies stall. Not because they lack AI opportunities, but because they lack a reliable way to compare them. When every project has a compelling internal champion and a plausible ROI story, gut instinct produces politics, not progress.

This article walks through a scoring framework that makes the comparison objective. You will leave with a method to rank any set of AI initiatives across five dimensions. You will also get a simple matrix you can fill out in a single planning session and a process for turning scores into a sequenced roadmap.

The 5-Criteria Scoring Matrix

Here is a quick-score version of the framework. If you want to jump straight to the scoring, use this table. The rest of the article explains the thinking behind each dimension and how to weight them for your situation.

| Dimension | 1 (Low) | 5 (High) |
|---|---|---|
| Business Impact | Marginal improvement to a non-critical process | Direct revenue or cost impact on a core operation |
| Feasibility | Requires new infrastructure or unproven technology | Uses existing tools, data, and team capabilities |
| Data Readiness | Data does not exist or is scattered across systems | Clean, structured data is already accessible |
| Strategic Alignment | Loosely connected to business objectives | Directly advances your top strategic priority |
| Speed to Value | 12+ months before any measurable return | Measurable results within 1 to 3 months |

Score each project 1–5 on every dimension, total them up, and compare. A project scoring 22/25 with strong feasibility and speed is a better starting point than one scoring 18/25 that requires infrastructure you do not have yet. The full explanation is in the scoring matrix section below.


Start With Your Strategic Objectives

Before ranking any AI projects, you need clarity on what you are trying to achieve. AI can address many problems, but without explicit priorities, you chase initiatives that do not align with your actual business needs.

Common strategic objectives include:

Financial Goals: Cost reduction is the dominant driver right now. If your bottom line needs immediate relief, optimization projects that reduce operational costs should rank higher than experimental innovation.

Innovation and Differentiation: New revenue streams or competitive advantages. These projects change how you compete in your market.

Risk Mitigation: If employees are using AI tools without guidelines, or if you operate in a regulated industry, governance might be your top priority before anything else.

Problem Resolution: Specific operational bottlenecks causing daily friction. The “if we could just solve this one thing” projects everyone already knows about.

Culture and Talent: AI projects that make your company more appealing to skilled workers or reduce frustrating manual work support talent retention goals.

Knowledge Democratization: Breaking down information silos. When critical knowledge lives only in certain people’s heads, AI can capture and distribute that expertise across your organization.

Most companies have multiple objectives. That is fine. Rank them. Make it clear that Financial is priority #1, Problem Resolution is #2, and so on. This ranking becomes crucial when comparing projects later.

Check Your Foundation First

Sometimes the best “AI project” means fixing the basics that will make AI projects actually work.

We wrote an entire piece on assessing your organization’s AI readiness that covers this in depth. The core insight: you are only as strong as your weakest link.

Evaluate your organization across seven domains: Strategy, Product/Value tracking, Governance, Engineering capability, Data infrastructure, Operating Model, and Culture & People. If you score high on technical capability but low on governance, you are creating risk. If you have great strategy but poor data infrastructure, AI projects will struggle.

Before committing resources to an ambitious initiative, honestly assess where you stand across these domains. Your weakest area becomes your highest priority.

In this example, an organization needs to focus on value generation and governance. Addressing these two areas first raises the overall bar because maturity is limited by the weakest domain.
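The weakest-link idea can be sketched in a few lines. This is a minimal illustration, not a formal assessment tool: the 1–5 scores below are hypothetical, chosen only so that value tracking and governance come out lowest, and the function names are our own.

```python
# Hypothetical 1-5 self-assessment scores for the seven domains.
# The numbers are illustrative only, not taken from a real assessment.
readiness = {
    "Strategy": 4,
    "Product/Value tracking": 2,
    "Governance": 2,
    "Engineering capability": 4,
    "Data infrastructure": 3,
    "Operating Model": 3,
    "Culture & People": 4,
}


def overall_maturity(scores):
    """Overall maturity is capped by the weakest domain."""
    return min(scores.values())


def focus_areas(scores):
    """Domains sitting at the lowest score; these get attention first."""
    lowest = overall_maturity(scores)
    return [domain for domain, score in scores.items() if score == lowest]


print("Overall maturity:", overall_maturity(readiness))
print("Focus first on:", ", ".join(focus_areas(readiness)))
```

Taking the minimum rather than the average is the point: a high Engineering score does not offset a low Governance score.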

Another way to assess readiness: look at how AI integrates into your operations. Your maturity level determines what projects make sense:

- Foundations: Basic AI literacy, learning to prompt effectively

- Augmented Services: Human doing the work, AI helping

- Integrated AI: AI doing baseline work, human reviews

- AI-First: AI handles agentic tasks, human monitors for quality

Quick Wins vs. Long-Term Transformation

Once you understand your objectives and readiness level, decide on your approach: immediate validation or building toward larger transformation?

Quick Wins help you:

- Prove AI delivers value quickly

- Build organizational confidence

- Generate momentum for larger initiatives

- Show fast returns to leadership or boards

North Star projects offer:

- Compounding value over time

- Lower long-term risk through thoughtful design

- Stronger competitive differentiation

- More effective change management

Select the right approach based on what matters to your business. If you choose a North Star approach, make it modular. Break the vision into discrete projects that each deliver value independently.

For example, a manufacturing client envisions an “AI Engineer” that generates quotes, answers technical questions, and automates routine engineering tasks. Rather than building everything at once, we are tackling quote generation first, then expanding. Each phase delivers measurable value while building toward the larger vision.

Why Sequencing Matters More Than Selection

Choosing the right projects matters. Choosing the right order matters just as much.

We see a pattern with organizations that lead with their most ambitious initiative. The project is complex, touches multiple departments, and takes longer than expected. Six months in, the team is exhausted, the budget is strained, and leadership starts questioning whether AI was worth the investment.

Compare that to starting with a focused, achievable project: a chatbot that handles 40% of routine customer inquiries, or an automation that saves the operations team eight hours a week. The win is visible within weeks. Leadership sees tangible returns. The team builds confidence. When it is time for the larger transformation project, you have budget credibility and internal expertise behind you.

Most projects that stall this way are not bad ideas. They are good ideas launched in the wrong order, before the organization had the experience, data, or infrastructure to support them.

Prove that AI delivers value in your specific environment before scaling up. Each successful project teaches your team how to work with AI, surfaces data quality issues, and builds the case for the next investment.

Consider a hypothetical mid-market logistics team in 2026 with three candidate AI projects on their backlog: predictive routing, automated claims processing, and a customer-facing chatbot. Score them with the 5-criteria matrix and the chatbot lands lowest on Business Impact (1) but highest on Data Readiness (5) and Speed to Value (5). Start there, ship in eight weeks, free up the support team to handle higher-value work, and use the operational lessons to scope the claims processing project. The predictive routing project — the one with the highest theoretical ROI — gets moved to Q4 because the data infrastructure is not ready. Twelve months earlier, that project would have been the obvious starting point and would likely have stalled at integration.

How This Framework Compares to Gartner and CIO-Style Approaches

The 5-dimension scoring matrix above is one of several prioritization approaches circulating in the enterprise AI space. Here is how it compares to two of the most widely referenced alternatives.

Gartner’s approach (as reflected in their strategic planning guides and the 2025 agentic AI analysis) weights feasibility and business value heavily, typically using a 2×2 or 3×3 priority matrix. The strength is speed: a leadership team can slot projects into quadrants in under an hour. The weakness is that a 2×2 collapses distinct concerns (data readiness vs technical complexity, for instance) into one “feasibility” axis, which hides the specific gap that would keep a project from succeeding.

CIO-style frameworks (the ones most commonly taught in IT governance programs) tend to lead with business-IT alignment and risk-adjusted ROI. Projects get filtered through portfolio governance, then scored on strategic fit, financial return, and operational risk. This works well in mature IT organizations with formal portfolio processes. It works less well in companies that do not have AI capability established yet and need to learn as they go.

The 5-dimension approach above sits between them. It keeps the scoring granular enough to identify the specific bottleneck (data readiness as its own dimension, separate from feasibility) without requiring a formal portfolio governance function to run it. Here is the side-by-side:

| Approach | Scoring Granularity | Strength | Weakness | Best Fit |
|---|---|---|---|---|
| Gartner 2×2 | Low (2 axes) | Fast stakeholder alignment | Hides which specific gap blocks a project | Executive workshop framing |
| CIO Portfolio Governance | High (formal scoring rubric) | Financial rigor, risk adjustment | Requires established governance function | Mature IT organizations |
| 5-Dimension Scoring (this article) | Medium (5 dimensions, 1–5 scale) | Surfaces the specific bottleneck blocking each project | Less useful for very large portfolios (20+ projects) | Mid-market and emerging AI programs |

Which approach is “best” depends on the decision you are making. A 100-person company choosing between three AI pilots does not need portfolio governance. A 10,000-person enterprise with a governance function already in place does not need to invent a scoring rubric from scratch. The 5-dimension approach is most useful in the middle: companies that need more granularity than a 2×2 provides, but do not have the organizational overhead for a formal portfolio process.

Whatever framework you use, the underlying principle holds. The 40%+ cancellation rate Gartner projects for agentic AI initiatives is not a scoring problem. It is a sequencing problem. The scoring is input to the conversation. The conversation is where the decision actually gets made.

Five Quick-Win Categories With Consistent Returns

If you want to start with quick wins, these five areas consistently show high returns with reasonable implementation timeframes:

- Customer Service Automation: Chatbots and support systems that handle routine inquiries

- Marketing and Sales Content: AI-assisted content creation for SEO, social media, and campaigns

- Internal Knowledge and Productivity: Making tribal knowledge accessible and accelerating routine tasks

- Administrative Workflows: Automating repetitive processes that consume staff time

- Quality Control and Inspection: AI-powered checks that catch issues faster than manual review

These areas work well as starting points because they address existing processes you already understand, require less specialized expertise, build trust through visible improvements, and create data and processes that support future initiatives.

Do not chase quick wins because they are easy. If your business faces a crisis that requires longer-term transformation, quick wins will not solve it. Sometimes you need to go deep.

Map Your Constraints Before Ranking

Every organization has boundaries. Ignoring them leads to failed projects.

Map these constraints before ranking projects:

Time and Budget Limits: What is the maximum you are willing to invest? If a project exceeds these limits, can you break it into smaller phases?

Risk Tolerance: A 5% chance of complete failure might be unacceptable even if the potential upside is high. Know your threshold.

Regulatory and Compliance Requirements: Legal limitations can dramatically increase project complexity. One client discovered halfway through planning that compliance requirements tripled the scope. Better to know upfront.

ROI Minimums: Some projects might be worthwhile in isolation but do not clear your organization’s return threshold. Be explicit about what ROI you need.

External Dependencies: Vendors, partners, or systems outside your control can push a project beyond your constraints.

Organizational Capacity: How many projects can you realistically manage simultaneously? We see companies try to run five AI initiatives when they only have bandwidth for two.
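One way to make these limits explicit is to write them down as data and check each candidate against them before any scoring happens. The sketch below is a simplified illustration: the class names, fields, and figures are all hypothetical, and it covers only time, budget, and risk tolerance.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    months_to_value: int
    cost: float
    failure_risk: float  # estimated chance of complete failure, 0.0 to 1.0


@dataclass
class Constraints:
    max_months: int
    max_budget: float
    max_failure_risk: float


def violations(candidate, limits):
    """Return the names of the constraints this candidate breaks."""
    broken = []
    if candidate.months_to_value > limits.max_months:
        broken.append("time")
    if candidate.cost > limits.max_budget:
        broken.append("budget")
    if candidate.failure_risk > limits.max_failure_risk:
        broken.append("risk")
    return broken


# Hypothetical limits and candidates; replace with your own figures.
limits = Constraints(max_months=6, max_budget=150_000, max_failure_risk=0.05)
candidates = [
    Candidate("Customer service chatbot", 3, 60_000, 0.02),
    Candidate("Predictive maintenance", 18, 400_000, 0.10),
]

for c in candidates:
    broken = violations(c, limits)
    status = "fits" if not broken else "phase or defer (" + ", ".join(broken) + ")"
    print(f"{c.name}: {status}")
```

A candidate that breaks a constraint is not rejected outright. It is flagged so the team can ask whether it can be split into phases that do fit.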

The Six Ranking Criteria

We evaluate initiatives across six criteria:

1. Goal Alignment

How well does this project support your stated objectives? If reducing costs is your #1 priority, a project that offers strong cost reduction ranks higher than one focused on innovation, even if the innovation project sounds more exciting.

With priorities of Financial (1), Problem Resolution (2), and Risk Mitigation (3), “Customer service automation” is better aligned than the other two projects in the comparison.

2. Constraint Fit

Does this project fit within your constraints? If it requires 18 months and you need results in 6, it either needs to be broken down or moved aside for now.

3. Urgency

Some problems cannot wait. If a key employee is retiring in six months and taking critical knowledge with them, capturing that knowledge becomes urgent regardless of ROI calculations.

4. Implementation Effort

Lower effort does not automatically win. But when comparing two projects with similar returns, the one requiring less effort rises to the top. Effort includes technical complexity, data requirements, change management needs, and integration challenges.

5. Expected Impact

What measurable improvement does this project deliver? Time saved, money earned, problems solved, knowledge democratized. Quantify this as specifically as possible.

6. Strategic Factors

These are the intangibles. A project might support an important partnership, align with competitive positioning, or create capabilities that enable future initiatives. These factors tip the scales between otherwise similar projects.

The Scoring Matrix: From Criteria to Numbers

The six criteria above give you a qualitative framework. To make it actionable in a room full of stakeholders with competing priorities, you need numbers.

Score each project on five dimensions, 1 through 5. This is the same table from the top of the article, now applied to a concrete comparison.

Here is what this looks like with three projects a mid-size manufacturer might be comparing:

| Project | Impact | Feasibility | Data | Alignment | Speed | Total (/25) |
|---|---|---|---|---|---|---|
| Customer service chatbot | 4 | 4 | 3 | 5 | 4 | 20 |
| Predictive maintenance | 5 | 2 | 2 | 4 | 2 | 15 |
| Quote generation | 4 | 3 | 4 | 4 | 3 | 18 |

The predictive maintenance system has the highest single-dimension score (Impact: 5), but ranks last overall because the data infrastructure is not ready and the timeline stretches past a year. The chatbot wins on total score because it is feasible with current resources and delivers value quickly.
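If you keep scores in a spreadsheet or script, the totals and the weakest dimensions are easy to compute. The sketch below uses the scores from the table above; the function names are our own and the validation rules are an assumption about how you might want to guard the 1–5 scale.

```python
# Scores copied from the comparison table above.
# Dimension order: Impact, Feasibility, Data, Alignment, Speed.
DIMENSIONS = ("impact", "feasibility", "data", "alignment", "speed")

projects = {
    "Customer service chatbot": (4, 4, 3, 5, 4),
    "Predictive maintenance": (5, 2, 2, 4, 2),
    "Quote generation": (4, 3, 4, 4, 3),
}


def total_score(scores):
    """Unweighted total out of 25; rejects scores outside the 1-5 scale."""
    if len(scores) != len(DIMENSIONS):
        raise ValueError("expected one score per dimension")
    if any(not 1 <= s <= 5 for s in scores):
        raise ValueError("scores must be between 1 and 5")
    return sum(scores)


def weakest_dimensions(scores):
    """Names of the dimension(s) holding the lowest score."""
    lowest = min(scores)
    return [d for d, s in zip(DIMENSIONS, scores) if s == lowest]


# Highest total first.
ranking = sorted(projects, key=lambda name: total_score(projects[name]), reverse=True)

for name in ranking:
    scores = projects[name]
    weak = ", ".join(weakest_dimensions(scores))
    print(f"{name}: {total_score(scores)}/25 (weakest: {weak})")
```

Listing the weakest dimensions next to each total keeps the bottleneck visible, which is what the conversation should focus on.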

This is not the final decision. It makes the conversation objective. When someone advocates for predictive maintenance, point to the specific dimensions where it falls short: what would it take to raise Feasibility from a 2 to a 4? That turns a debate into a planning conversation.

One nuance: weight the dimensions based on your situation. If your organization has strong data infrastructure but limited budget, weight Feasibility higher. If you need to demonstrate value to a skeptical board, weight Speed to Value higher.
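Weighting can be sketched as a weighted average that stays on the 1–5 scale. The two weight sets below are hypothetical examples, not recommendations; the project scores are the same ones used in the manufacturer comparison.

```python
DIMENSIONS = ("impact", "feasibility", "data", "alignment", "speed")

# Same scores as the manufacturer comparison table.
projects = {
    "Customer service chatbot": (4, 4, 3, 5, 4),
    "Predictive maintenance": (5, 2, 2, 4, 2),
    "Quote generation": (4, 3, 4, 4, 3),
}


def weighted_score(scores, weights):
    """Weighted average of the five scores, still on the 1-5 scale."""
    total_weight = sum(weights[d] for d in DIMENSIONS)
    weighted_sum = sum(weights[d] * s for d, s in zip(DIMENSIONS, scores))
    return weighted_sum / total_weight


def rank(weights):
    """Project names ordered from highest to lowest weighted score."""
    return sorted(projects, key=lambda n: weighted_score(projects[n], weights), reverse=True)


# Hypothetical weights: a skeptical board, so Speed to Value counts double.
board_weights = {"impact": 1, "feasibility": 1, "data": 1, "alignment": 1, "speed": 2}

# Hypothetical weights: impact dominates everything else.
impact_heavy = {"impact": 5, "feasibility": 1, "data": 1, "alignment": 1, "speed": 1}

print("Board weighting: ", rank(board_weights))
print("Impact weighting:", rank(impact_heavy))
```

With these particular numbers, doubling Speed to Value leaves the order unchanged, while the impact-heavy weighting moves predictive maintenance ahead of quote generation. Agree on the weights before scoring so that nobody tunes them to favor a preferred project.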


Translating Value Into Comparable Terms

Ranking requires making projects comparable. That means translating different types of value into common terms.

For optimization projects (reducing time or cost), quantification is straightforward:

- Current weekly hours spent on task X → hours saved with AI

- Current error rate causing rework → errors prevented with AI

- Current cost per transaction → cost reduction with AI

For growth projects (new capabilities, new revenue), quantification is harder but still necessary:

- Estimated customer acquisition from new capability

- Projected conversion rate improvements

- Competitive advantage that protects existing revenue

The key steps:

- Interview stakeholders who actually do the work or manage the process

- State your assumptions explicitly so they can be validated

- Quantify in terms of money and time whenever possible

- Document the risks that could prevent you from achieving these returns

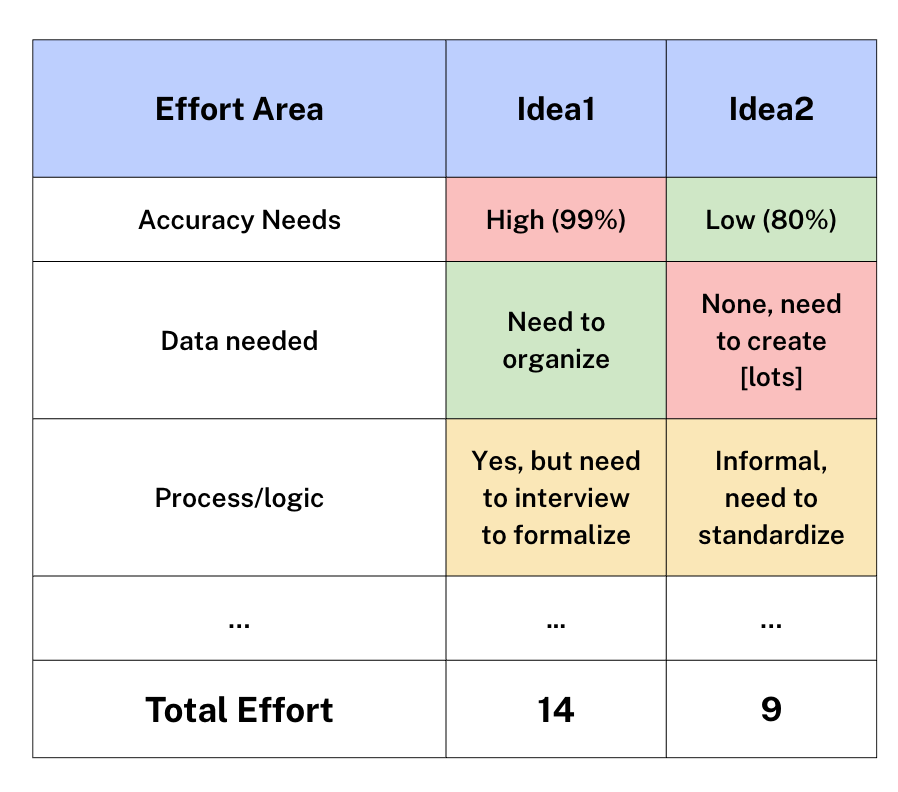

When documenting assumptions, note the accuracy level you need. An AI system that saves time but still requires human review may only need around 80% accuracy. One that operates autonomously might need 99%+. Higher accuracy requirements mean more effort, which affects your ranking.
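For optimization projects, the translation into money is simple arithmetic. The sketch below is a rough illustration: the hourly cost, working weeks, and implementation cost are hypothetical assumptions you would replace with figures gathered from stakeholder interviews.

```python
def annual_time_savings(hours_saved_per_week, loaded_hourly_cost, working_weeks=48):
    """Yearly value of time saved, in the same currency as the hourly cost."""
    return hours_saved_per_week * loaded_hourly_cost * working_weeks


def payback_months(implementation_cost, annual_value):
    """Months until cumulative savings cover the implementation cost."""
    if annual_value <= 0:
        raise ValueError("annual value must be positive")
    return implementation_cost / (annual_value / 12)


# Hypothetical assumptions, stated explicitly so they can be validated:
# 8 hours saved per week, 60 per hour fully loaded, 48 working weeks.
annual_value = annual_time_savings(hours_saved_per_week=8, loaded_hourly_cost=60)
months = payback_months(implementation_cost=11_520, annual_value=annual_value)

print(f"Annual value: {annual_value:,.0f}")
print(f"Payback: {months:.1f} months")
```

Keeping each assumption as a named parameter makes it obvious which numbers a stakeholder needs to confirm.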

Optimization vs. Growth: Current Economic Reality

Economic conditions matter when prioritizing. Right now, most businesses are in optimization mode.

Optimization projects focus on reducing operational costs, eliminating wasted time, solving friction points, and improving efficiency of existing processes.

Growth projects focus on new revenue sources, market differentiation, competitive advantage, and unmet customer demand.

Optimization projects are generally easier to implement because you are improving something you already understand. The processes exist, the data exists, and ROI is straightforward to calculate.

Growth projects carry more risk. You are building new capabilities or entering new markets. The expertise might not exist in-house. You need market validation to confirm the opportunity.

Companies that only optimize will fall behind when the economy shifts. Be realistic about which projects fit your current environment and risk tolerance.

Scoring Effort and Impact

To create an objective ranking, score both the effort required and the impact delivered.

Effort factors to evaluate:

- Accuracy requirements: Higher accuracy = more effort

- Data availability: Creating data from scratch is high effort; organizing existing data is medium

- Process definition: Documented processes are easier to automate than tribal knowledge

- Multiple roles or functions: Each additional role increases complexity

- Multi-modal steps: Switching between task types (scraping, document processing, calculations) adds effort

- Risk boundaries: Safeguards needed to prevent problems

- Change management: Training and adoption support required

- Security and compliance: Regulatory requirements add significant effort

- Integrations: Each API or system connection increases complexity

- Dependencies: Prerequisites that must be completed first

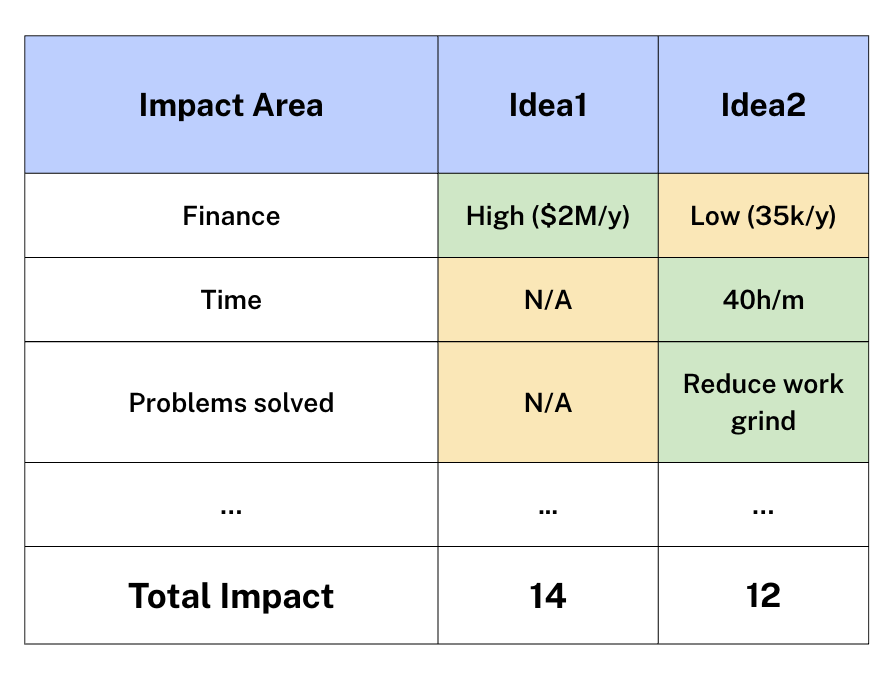

Impact factors to evaluate:

- Financial impact: Money saved or earned

- Time impact: Hours freed up

- Problems solved: Pain points eliminated

- Innovation value: Competitive differentiation created

- Culture impact: Employee satisfaction improvements

- Knowledge democratization: Information made accessible

- Priority alignment: How well it fits your stated objectives

- Risk reduction: Risks mitigated or eliminated

- Strategic value: Long-term positioning benefits

- Time to return: How quickly you see results

- Emergent value: Whether this project powers future initiatives

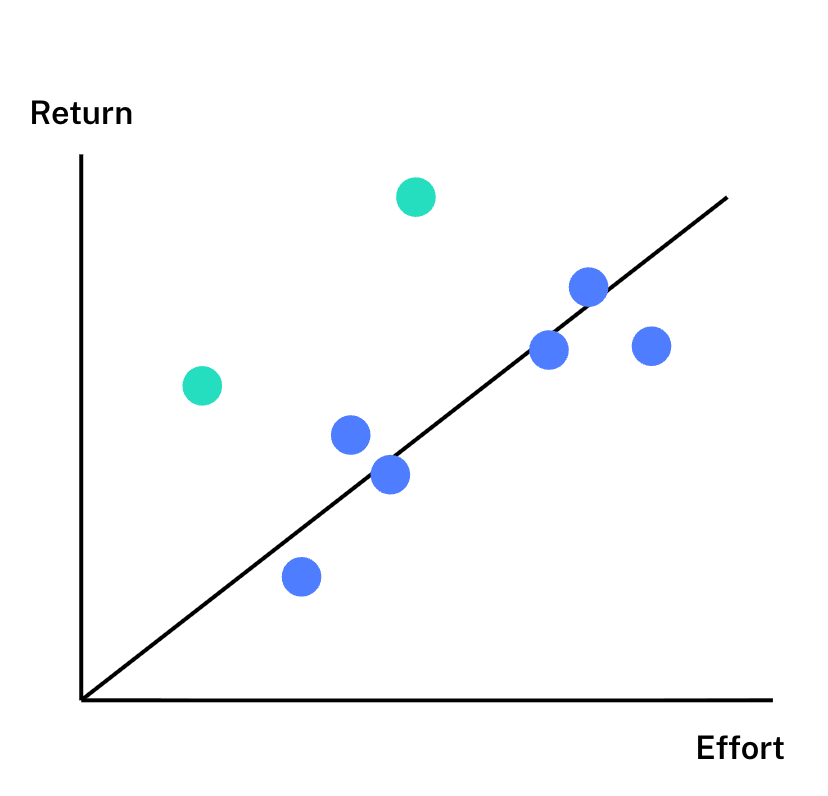

Plot projects on an Effort vs. Return graph. Projects above the trend line offer better returns than their effort suggests. Projects below the line require disproportionate effort. For quick wins, focus on low-effort projects above the line.
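The trend line can be approximated with an ordinary least-squares fit. The sketch below assumes you have already collapsed the effort and impact factors into one number each per project; the project names, scores, and the low-effort cutoff are all hypothetical.

```python
# Hypothetical (effort, return) scores per project, for illustration only.
projects = {
    "Project A": (2, 5),
    "Project B": (4, 4),
    "Project C": (6, 9),
    "Project D": (8, 8),
}


def fit_trend_line(points):
    """Ordinary least-squares line through (effort, return) points.

    Assumes at least two distinct effort values.
    """
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


slope, intercept = fit_trend_line(list(projects.values()))

# Projects whose return exceeds what the trend line predicts for their effort.
above = [name for name, (effort, ret) in projects.items()
         if ret > slope * effort + intercept]

# Quick-win candidates: above the line and at or below a chosen effort cutoff.
MAX_QUICK_WIN_EFFORT = 3  # hypothetical threshold
quick_wins = [name for name in above if projects[name][0] <= MAX_QUICK_WIN_EFFORT]

print("Above the trend line:", above)
print("Quick-win candidates:", quick_wins)
```

With only a handful of projects the fitted line is a rough guide, so treat a project sitting close to it as a judgment call.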

The Four Requirements Every AI Project Needs

Regardless of ranking score, a project will not succeed unless it meets four fundamental requirements:

- Solves one problem and does one job: Each project should have a clear, focused purpose.

- Has a clear process or logical framework: Either documented already, or definable as part of the project. AI works best when decision logic is explicit.

- Has supporting data: Either data exists, or you have a plan to create it during implementation.

- Has known technical limitations: You understand the challenges and how you will address them.

Missing any of these four dramatically increases project risk.

The Human Side of Prioritization

Technical frameworks help you prioritize projects. Actually implementing them requires addressing the human side.

I wrote a comprehensive guide on AI change management that covers this in depth. The core principle: the most sophisticated AI system fails if people will not use it or actively resist it.

Education reduces resistance. People who understand AI (what it can do AND what it cannot do) are significantly less afraid of job replacement and more willing to adopt AI systems. When people learn AI’s limitations, they feel empowered rather than threatened.

Frame projects around team benefits, not just metrics. Leadership naturally thinks about revenue and costs. Also frame improvements in terms of what your team gains: eliminating 10 hours of repetitive work, removing tedious manual tasks, freeing people for creative and analytical work.

Integration beats disruption. Projects that augment existing workflows face less resistance than those requiring complete process changes. Run old and new approaches in parallel until people are comfortable.

Include resistors in planning. The people most resistant to change often become the strongest advocates once their concerns are addressed. They frequently speak for the broader team’s worries.

If two projects score similarly on technical merit, choose the one with better change management prospects. A slightly lower-ROI project that succeeds beats a higher-ROI project that fails due to poor adoption.

Risk Categories to Evaluate

Every AI project carries risks. Document them honestly.

Technical Feasibility & Performance: Can the AI actually do what you need? Do you have the technical skills? Will model accuracy meet requirements?

Security & Compliance: Data privacy concerns, regulatory requirements, legal constraints.

Adoption & Change Management: Will people use it? Do they have training? Is there organizational resistance?

Project Design & ROI: Can you measure returns? Is this aligned with priorities? Does the data readiness support success?

Business Continuity: What happens if a vendor disappears? Do you have resources to maintain this long-term?

Ethical & Reputation: Could this create bias issues? Are there transparency concerns?

Quality & Expectations: Will it meet stakeholder expectations? Is the uncertainty around outcomes acceptable?

High-risk projects need stronger expected returns to justify the risk. Make risk assessments explicit in your ranking so everyone understands the trade-offs.

The Decision Matrix: Bringing It Together

With all this information gathered, you can create a comprehensive project comparison.

Your decision matrix should include:

- Project name and benefit summary

- Monetary and/or time benefits

- Strategic importance (goal-fit)

- Impact/Effort ratio

- Key assumptions

- Risk assessment

This gives you an objective basis for discussion. When someone champions their favorite project, you can show exactly why a different one ranks higher based on criteria the team agreed on.

Building Your AI Roadmap

Once you have ranked projects, evaluated change management, and documented risks, translate everything into an execution roadmap.

Your roadmap should show:

- Start and end dates for each project

- Dependencies between projects

- Measurement points for ROI assessment

- Status tracking (approved, tentative, planned)

Aim for 6–12 month ROI on initial projects. AI is moving too fast to bet on 3-year returns for your first initiatives. If you have larger projects, break them into phases with measurable returns under 12 months each. (24 months for large companies or projects is acceptable if well managed and planned.)

The roadmap brings together strategic objectives, readiness assessment, constraints, rankings, change management, and risk into a single plan.

Example roadmap showing a 3-month delay until first release, followed by monthly ROI checkpoints and quarterly strategic review sessions.
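Dependencies and phase lengths can be checked with a small script before anything goes on a timeline. This is a simplified sketch: the project names, durations, and dependencies are hypothetical, and it ignores team capacity by assuming independent projects can run in parallel.

```python
from graphlib import TopologicalSorter

# project -> (duration in months, prerequisite projects); all values hypothetical
plan = {
    "Customer chatbot": (2, []),
    "Claims processing": (4, ["Customer chatbot"]),
    "Data infrastructure": (5, []),
    "Predictive routing": (6, ["Data infrastructure", "Claims processing"]),
}

MAX_PHASE_MONTHS = 12  # phases should return value in under a year


def schedule(plan):
    """Earliest (start, end) month for each project, honoring dependencies."""
    graph = {name: deps for name, (_, deps) in plan.items()}
    timeline = {}
    # static_order() yields every project after all of its prerequisites.
    for name in TopologicalSorter(graph).static_order():
        duration, deps = plan[name]
        start = max((timeline[d][1] for d in deps), default=0)
        timeline[name] = (start, start + duration)
    return timeline


timeline = schedule(plan)
too_long = [name for name, (duration, _) in plan.items() if duration > MAX_PHASE_MONTHS]

for name, (start, end) in sorted(timeline.items(), key=lambda item: item[1]):
    print(f"{name}: month {start} to month {end}")
print("Phases to break down:", too_long or "none")
```

If your organization can only run two initiatives at once, add that limit on top of this ordering, since the earliest possible start is not always a realistic one.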

Your Next Steps

Strategic prioritization means finding the right projects for your organization’s current situation, objectives, and constraints, then building a roadmap that systematically addresses them.

- Clarify your strategic objectives and rank them explicitly

- Assess your AI readiness across the seven domains — your weakest areas might need attention first

- Identify your constraints to determine which projects are feasible and which need to be broken into smaller pieces

- Evaluate potential projects using the scoring matrix

- Consider change management and risks for each prioritized project

- Build your roadmap with clear dependencies, measurement points, and realistic timelines

We hope you found this useful for your organization’s planning. Reach out if you have questions about applying this process, feedback, or requests for future content. Sign up for our newsletter below to get our latest posts, and you can follow Sebastian on LinkedIn.

Frequently Asked Questions

What criteria should I use to prioritize AI projects?

Evaluate each project across five core dimensions: business impact (revenue or cost effect), feasibility (technical complexity and available resources), data readiness (whether the data exists and is accessible), strategic alignment (how directly it supports your top business priorities), and speed to value (how quickly you will see measurable results). Weight these dimensions based on your specific situation. A company under budget pressure should weight feasibility and speed higher, while one focused on competitive positioning should weight strategic alignment and impact higher.

How do I know if an AI project is worth pursuing?

Identify the specific metric the project will move. If you cannot articulate what will improve and by how much, the project is not ready for investment. Then validate the business value before committing: run a lightweight proof of concept, simulate the process manually, or test a small slice with existing tools. This costs a fraction of full implementation and prevents you from discovering halfway through that the underlying assumptions were wrong.

Should I start with quick wins or transformational AI projects?

In most cases, start with quick wins. They build organizational trust, demonstrate measurable ROI, and develop internal capability that supports larger initiatives. Starting with transformation carries real risk: high complexity, long timelines, and organizational fatigue before results appear. If your business faces a genuine crisis that only structural change can address, quick wins will not solve it. The default should be proving value first and scaling from there.

How many AI projects should a company pursue at once?

For mid-size organizations, two to three active AI initiatives at a time is the practical ceiling. Each requires focused attention from people with other responsibilities. Running five shallow pilots simultaneously tends to produce five mediocre results. Running two projects with proper resources and clear ownership tends to produce two successful implementations that justify the next round.

What makes an AI project high priority versus low priority?

High-priority projects have clear quantifiable business value, moderate technical feasibility, accessible data, strong strategic alignment, a realistic path to results within three to six months, and an internal champion. Low-priority projects typically have unclear ROI, require infrastructure that does not exist, depend on uncollected data, or lack an internal sponsor. A project can have enormous potential value and still be low priority if the prerequisites are not in place.

Related Reading

- AI Readiness Assessment: The 5-Stage Model — Assess your organization across seven domains before committing to AI projects.

- AI Change Management — The people side of AI adoption that determines whether good implementations get used.

- AI Risk & Security Assessment — Evaluate security and compliance risks before deploying AI in regulated environments.

From scoring to roadmap

Scoring projects on paper is the first step. The real test is validating those scores against your readiness, data infrastructure, and team capacity. Talk to us about building a roadmap that accounts for all the variables, or explore our AI Whiteboarding service for a structured 90-minute planning session.