Why Your AI Model Choice Matters Less Than Your System Design

The Model Obsession Is Costing You Money

Somewhere right now, a leadership team is three months into comparing GPT-5 against Claude 4 against Gemini for their next AI initiative. They have spreadsheets. They have benchmark scores. They have opinions from every vendor in the space.

They have not discussed how data flows into the system, what happens when it produces bad output, or who is responsible when something breaks at 2 a.m.

This pattern shows up everywhere. And it helps explain a striking number: 95% of generative AI pilots fail to move into production, according to research from MIT’s NANDA initiative. The failures are not about model quality. They are about everything surrounding the model.

CDO Insights research via Informatica found that 43% of AI projects fail on data readiness alone. Not model capability. Data readiness. The information feeding the system was wrong, incomplete, or inaccessible, and no amount of model sophistication could compensate.

McKinsey’s assessment is blunt: redesigned processes, not model choice, create the most enterprise impact. Gartner goes further, projecting that more than 40% of agentic AI projects will be scrapped by 2027 for lack of clear value.

These are not model problems. They are system design problems dressed up as technology decisions. And the gap between recognizing this intellectually and acting on it operationally is where most AI initiatives stall.

Model selection matters. It’s just not the first decision, and it’s not the decision that determines whether the implementation succeeds.

What Actually Determines AI Implementation Success

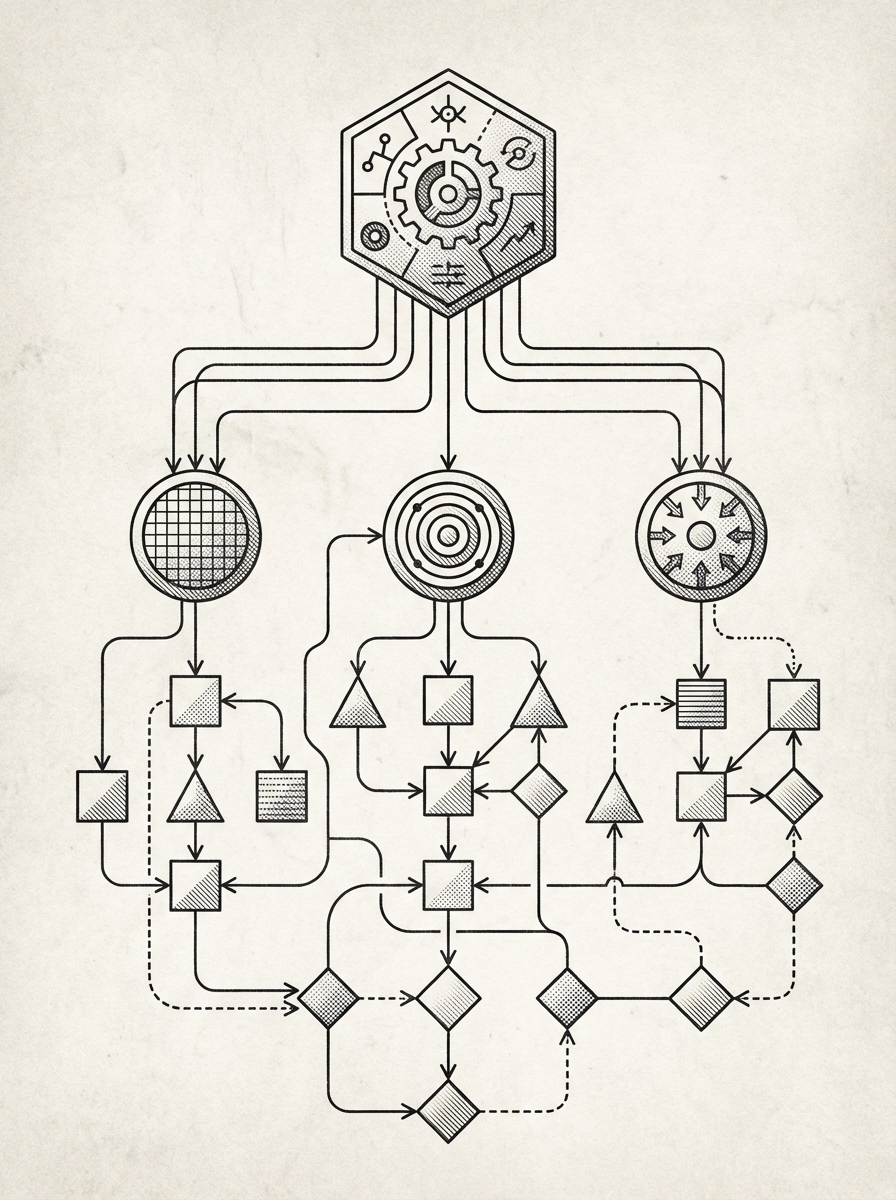

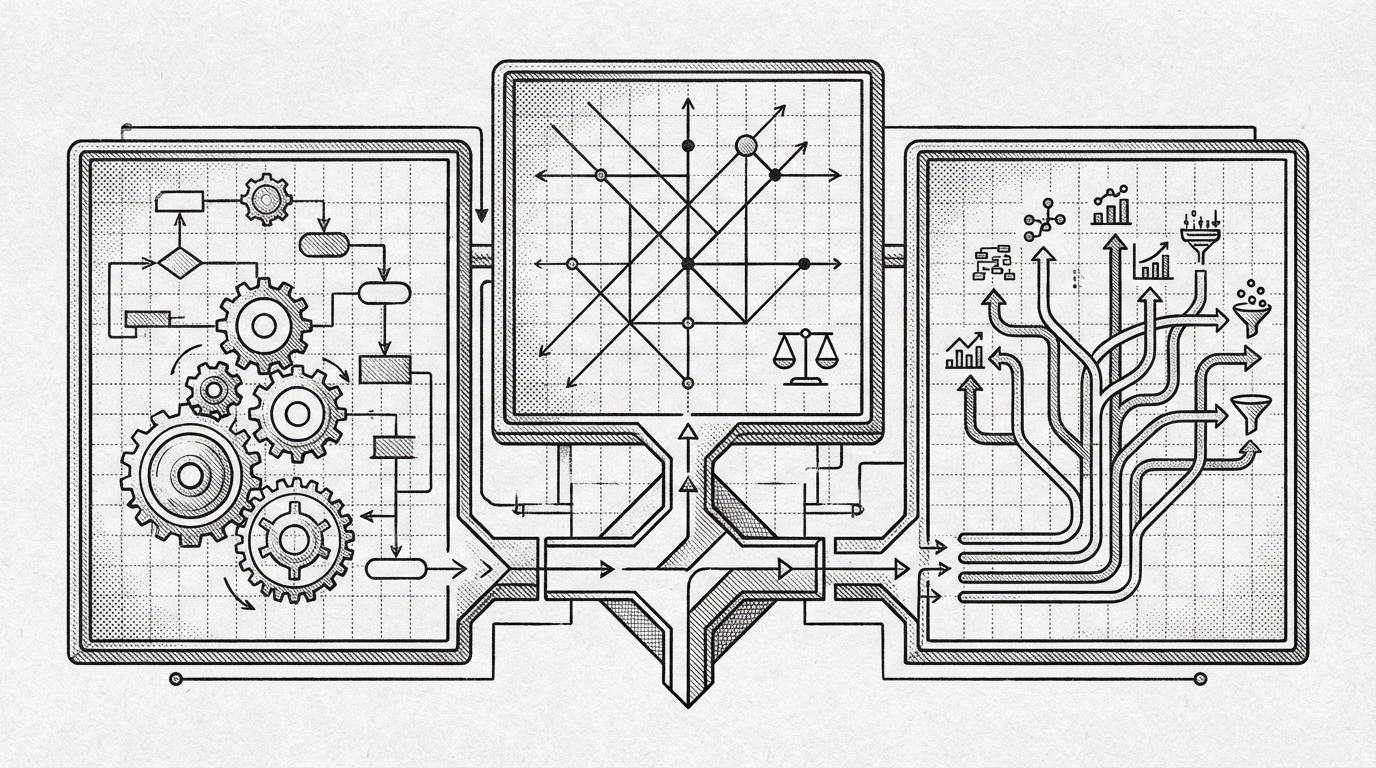

The model is one component in a system. A critical one, but its contribution to success or failure is smaller than most executives assume. Five architectural decisions carry more weight.

1. Orchestration Architecture

How tasks get decomposed, delegated, and coordinated determines what the system can actually accomplish. A single model handling everything hits limits quickly. Complex business processes involve research, analysis, decision-making, execution, and verification. Each step has different requirements. A well-orchestrated system breaks these apart. It routes each step to the right capability, manages dependencies, and reassembles results into something coherent.
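The decompose, route, and reassemble pattern can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular orchestration framework works: each step is a plain function (a hypothetical stand-in for a model call, a search, or a database query), and `deps` declares which steps must finish first. The standard library's `graphlib.TopologicalSorter` (Python 3.9+) supplies a dependency-respecting order.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, Set

# A step receives the outputs of its dependencies and returns its own output.
# In a real system each step would be routed to the capability suited to it.
Step = Callable[[Dict[str, str]], str]


def run_workflow(steps: Dict[str, Step], deps: Dict[str, Set[str]]) -> Dict[str, str]:
    """Run every step after all of its dependencies; return each step's output."""
    results: Dict[str, str] = {}
    # static_order() yields each step only after all of its predecessors.
    for name in TopologicalSorter(deps).static_order():
        inputs = {dep: results[dep] for dep in deps.get(name, set())}
        results[name] = steps[name](inputs)
    return results


# Hypothetical four-step business process: research -> analyze -> decide -> verify.
steps: Dict[str, Step] = {
    "research": lambda _: "raw findings",
    "analyze": lambda i: f"analysis of {i['research']}",
    "decide": lambda i: f"decision based on {i['analyze']}",
    "verify": lambda i: f"verified: {i['decide']}",
}
deps = {"analyze": {"research"}, "decide": {"analyze"}, "verify": {"decide"}}

print(run_workflow(steps, deps)["verify"])
# verified: decision based on analysis of raw findings
```

Because the dependencies are data rather than code, adding a parallel branch or an extra verification step is a change to `deps`, not a rewrite of the steps themselves.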

Orchestration also determines resilience. When one step fails, does the entire process crash, or does the system retry, route around the failure, or escalate to a human? That behavior lives in the orchestration layer, not the model.
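One way the retry, route-around, or escalate behavior might look in the orchestration layer is sketched below. It assumes each step can be attempted by an ordered list of handlers (a primary path and alternates) and uses a hypothetical `EscalateToHuman` exception as the escalation hook; backoff delays and error classification are left out for brevity.

```python
from typing import Callable, List


class EscalateToHuman(Exception):
    """Raised when automated handling is exhausted (hypothetical escalation hook)."""


def run_step(handlers: List[Callable[[], str]], retries: int = 2) -> str:
    """Try each handler in order, retrying each up to `retries` extra times.

    If every handler fails, escalate with the collected errors instead of
    letting one failed step crash the whole process.
    """
    errors: List[str] = []
    for handler in handlers:
        name = getattr(handler, "__name__", repr(handler))
        for attempt in range(retries + 1):
            try:
                return handler()
            except Exception as exc:  # production code would catch narrower errors
                errors.append(f"{name} attempt {attempt + 1}: {exc}")
    raise EscalateToHuman("; ".join(errors))


# Hypothetical handlers: a flaky primary path and a broken alternate route.
calls = {"primary": 0}


def flaky_primary() -> str:
    calls["primary"] += 1
    if calls["primary"] < 3:
        raise TimeoutError("upstream timeout")
    return "primary result"


def broken_alternate() -> str:
    raise RuntimeError("service down")


print(run_step([flaky_primary]))  # prints "primary result" on the third attempt

try:
    run_step([broken_alternate], retries=1)
except EscalateToHuman as exc:
    print("escalated:", exc)
```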

The evidence for this is concrete. On the ARC-AGI-2 benchmark, Poetiq improved scores from 52.9% to 75% through better orchestration alone. Same underlying models. No model breakthroughs. Just smarter task decomposition and coordination. The orchestration layer, not the model, created the performance jump.

2. Data Pipeline Quality

Every AI system is only as good as the information feeding it. This sounds obvious until you see how many implementations treat data as an afterthought. Context management, retrieval-augmented generation, knowledge base curation, and data freshness all sit outside the model. Get them wrong and the model produces confident, well-structured nonsense.

The 43% data readiness failure rate is not about organizations lacking data. It is about data being siloed, stale, poorly formatted, or inaccessible to the systems that need it. Fixing this requires pipeline engineering, not a better model.
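What validation at ingestion can mean in practice is sketched below: every record is checked for required fields, a parseable timezone-aware timestamp, and freshness before it is allowed into the knowledge base, and anything that fails is quarantined with its reasons instead of silently indexed. The schema (`REQUIRED_FIELDS`) and the seven-day freshness window (`MAX_AGE`) are hypothetical placeholders.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, List, Tuple

# Hypothetical schema and freshness rule; real values depend on the source system.
REQUIRED_FIELDS = ("id", "source", "updated_at", "body")
MAX_AGE = timedelta(days=7)

Record = Dict[str, Any]


def validate_record(rec: Record, now: datetime) -> List[str]:
    """Return a list of problems; an empty list means the record is usable."""
    problems = [f"missing {f}" for f in REQUIRED_FIELDS if not rec.get(f)]
    ts = rec.get("updated_at")
    if ts:
        try:
            updated = datetime.fromisoformat(ts)
        except (TypeError, ValueError):
            problems.append("unparseable timestamp")
        else:
            if updated.tzinfo is None:
                problems.append("timestamp has no timezone")
            elif now - updated > MAX_AGE:
                problems.append("stale")
    return problems


def ingest(records: List[Record], now: datetime) -> Tuple[List[Record], List[Tuple[Record, List[str]]]]:
    """Split records into those safe to index and those quarantined for review."""
    accepted: List[Record] = []
    quarantined: List[Tuple[Record, List[str]]] = []
    for rec in records:
        problems = validate_record(rec, now)
        if problems:
            quarantined.append((rec, problems))
        else:
            accepted.append(rec)
    return accepted, quarantined


now = datetime(2025, 1, 10, tzinfo=timezone.utc)
records = [
    {"id": "1", "source": "crm", "updated_at": "2025-01-09T12:00:00+00:00", "body": "Order shipped"},
    {"id": "2", "source": "crm", "updated_at": "2024-12-01T00:00:00+00:00", "body": "Old quote"},
    {"id": "3", "source": "sheet", "updated_at": "2025-01-09T12:00:00+00:00", "body": ""},
]
accepted, quarantined = ingest(records, now)
print([r["id"] for r in accepted])  # ['1']
print([p for _, p in quarantined])  # [['stale'], ['missing body']]
```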

What data failure looks like in practice: agents that don’t have good data will make it up. Consumer-facing AI models are trained to be helpful and to please, which makes them prone to hallucination when data is thin. They will also hand-wave. Ask an agent why something failed and it will explain that the issue was minor and should resolve by tomorrow. Press it, and it will admit it was guessing. It never researched the fault at all.

Hallucinations and hand-waving are common in underpowered deployments: if you don’t have the data, the AI fills the gap with plausible-sounding fiction. Your reports look rosy while your results don’t match. Content fails on authenticity because the agent has nothing real to draw from. Processes can’t be automated because nobody documented them in a form the agent can follow.

The data is the hub. The AI runs within it. A model without a data foundation is a brain in a jar: technically functional, not actually useful.
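One small design choice that addresses the data-poor case is to check retrieval results before the model is ever asked to answer, and to return an explicit "insufficient data" result when nothing supports an answer. The sketch below assumes hypothetical `retrieve` and `generate` callables standing in for a retrieval step and a model call. It does not stop a model from misreading the records it does get; it only removes the situation where the model has nothing real to work from.

```python
from typing import Callable, List

INSUFFICIENT_DATA = "INSUFFICIENT_DATA: no supporting records found; routing to a human."


def grounded_answer(
    question: str,
    retrieve: Callable[[str], List[str]],
    generate: Callable[[str, List[str]], str],
    min_docs: int = 1,
) -> str:
    """Only ask the model to answer when retrieval found supporting records."""
    docs = retrieve(question)
    if len(docs) < min_docs:
        # Refuse explicitly instead of letting the model improvise an answer.
        return INSUFFICIENT_DATA
    return generate(question, docs)


# Hypothetical stand-ins for a retrieval step and a model call.
def empty_index(question: str) -> List[str]:
    return []


def fake_model(question: str, docs: List[str]) -> str:
    return f"Answer based on {len(docs)} record(s)"


print(grounded_answer("Why did the sync fail?", empty_index, fake_model))
print(grounded_answer("Why did the sync fail?", lambda q: ["error log 42"], fake_model))
```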

3. Quality Gates and Feedback Loops

How does the system catch errors before they reach a customer, a database, or a decision-maker? This is where implementation quality separates production-grade systems from demos.

Quality gates take many forms: automated validation checks, secondary model reviews, human-in-the-loop approval points, output scoring against known-good examples. Each one exists outside the model. Each one determines whether the system is trustworthy enough for real work.
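A chain of automated validation checks is the simplest kind of quality gate to sketch. In the minimal version below, each gate is a function that returns `None` on success or a reason on failure, and the chain stops at the first failure so later gates can rely on earlier ones. The gate names and the required keys are hypothetical; a failing output would typically be routed to a retry, a secondary review, or a human queue rather than discarded.

```python
import json
from typing import Callable, List, Optional

# A gate returns None when the output passes, or a reason when it fails.
Gate = Callable[[str], Optional[str]]


def valid_json(output: str) -> Optional[str]:
    try:
        json.loads(output)
    except json.JSONDecodeError as exc:
        return f"not valid JSON: {exc.msg}"
    return None


def required_keys(*keys: str) -> Gate:
    def gate(output: str) -> Optional[str]:
        data = json.loads(output)  # assumes valid_json ran earlier in the chain
        if not isinstance(data, dict):
            return "expected a JSON object"
        missing = [k for k in keys if k not in data]
        return f"missing keys: {', '.join(missing)}" if missing else None
    return gate


def run_gates(output: str, gates: List[Gate]) -> Optional[str]:
    """Apply gates in order; stop at the first failure so later gates can
    rely on earlier ones having passed."""
    for gate in gates:
        reason = gate(output)
        if reason is not None:
            return reason
    return None


gates = [valid_json, required_keys("customer_id", "action")]
print(run_gates('{"customer_id": "c-17", "action": "refund"}', gates))  # None
print(run_gates('{"customer_id": "c-17"}', gates))  # missing keys: action
```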

Feedback loops close the circle. When the system produces a bad output and someone catches it, does that information flow back into the process? Systems that learn from their failures improve over time regardless of which model sits underneath. Systems without feedback loops make the same mistakes forever regardless of how capable the model is.
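A deliberately crude sketch of a feedback loop follows: when a reviewer rejects an output, the offending phrase becomes an automated check, and rejection reasons are counted so recurring failure types surface in reviews. The `FeedbackLoop` class and its substring matching are hypothetical simplifications; a real system might instead add a validation rule, append a known-bad example to a scoring set, or revise a prompt. The point is only that the correction flows back into the process.

```python
from collections import Counter
from typing import List, Optional


class FeedbackLoop:
    """Turns reviewer corrections into automated checks (hypothetical sketch)."""

    def __init__(self) -> None:
        self.blocked_phrases: List[str] = []
        self.rejection_reasons: Counter = Counter()

    def record_rejection(self, phrase: str, reason: str) -> None:
        # A human caught a bad output: remember the offending phrase so the
        # same mistake is blocked automatically next time, and count the reason
        # so recurring failure types show up in reviews.
        normalized = phrase.lower()
        if normalized not in self.blocked_phrases:
            self.blocked_phrases.append(normalized)
        self.rejection_reasons[reason] += 1

    def check(self, output: str) -> Optional[str]:
        """Return a reason if the output repeats a previously rejected phrase."""
        text = output.lower()
        for phrase in self.blocked_phrases:
            if phrase in text:
                return f"repeats rejected phrase: {phrase!r}"
        return None


loop = FeedbackLoop()
draft = "The issue was minor and should resolve by tomorrow."
print(loop.check(draft))  # None: nothing has been rejected yet
loop.record_rejection("should resolve by tomorrow", "unverified claim")
print(loop.check(draft))  # repeats rejected phrase: 'should resolve by tomorrow'
```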

4. Integration with Existing Workflows

AI systems that require people to change how they work tend to fail. Systems that connect to existing tools, processes, and habits tend to succeed. This is a design decision, not a model capability.

Integration means the AI reads from the same databases, posts to the same channels, and follows the same approval processes that humans already use. It means the output lands where someone will actually see it and act on it, not in a separate dashboard nobody checks after the first week.

Integration is underestimated because it is unglamorous. None of it makes for exciting demos. All of it determines whether the system survives its first month of actual use.

Two examples from our client work: one engagement connected a HubSpot CRM, a WooCommerce storefront, ad-hoc sales tracking spreadsheets in Google Sheets, and QuickBooks into a unified data layer, then built agentic systems on top to observe and track the full user journey across every entry point and sale endpoint in the ecosystem. Another connected a content production pipeline to a Mailchimp newsletter system and an Apollo outbound platform, then added agent automation to give AI systems full data access across the entire pipeline. In both cases, the integration work took longer than the agent build. And in both cases, the integration was the reason the system worked.

5. Observability and Governance

How do you know the system is working? When it stops working, how quickly do you find out? Who is accountable for the output?

These questions have nothing to do with model selection and everything to do with whether an AI system can run in production. Logging, monitoring, alerting, audit trails, permission boundaries, and escalation paths are all system design decisions. Skip them and you get a system that works in demos and fails silently in production.
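Logging and audit trails can be attached to every step without touching the step's logic. The sketch below is one possible shape, assuming a hypothetical `observed` decorator, a hypothetical logger name, and an in-memory list standing in for a durable audit store. Each call records its status, duration, and any error, and failures are logged at ERROR level (the signal an alerting rule would watch) while still propagating to the caller instead of failing silently.

```python
import functools
import json
import logging
import time
from typing import Any, Callable, Dict, List

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
logger = logging.getLogger("agents.audit")  # hypothetical logger name

# In-memory stand-in for a durable audit store (database, log pipeline, ...).
AUDIT_TRAIL: List[Dict[str, Any]] = []


def observed(step: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Record status, duration, and errors for every call to the wrapped step."""
    def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            record: Dict[str, Any] = {"step": step, "status": "error"}
            start = time.monotonic()
            try:
                result = fn(*args, **kwargs)
                record["status"] = "ok"
                return result
            except Exception as exc:
                record["error"] = repr(exc)
                raise  # never swallow the failure; the caller still sees it
            finally:
                record["duration_ms"] = round((time.monotonic() - start) * 1000, 1)
                AUDIT_TRAIL.append(record)
                # ERROR-level entries are what an alerting rule would watch for.
                level = logging.INFO if record["status"] == "ok" else logging.ERROR
                logger.log(level, json.dumps(record))
        return wrapper
    return decorator


@observed("enrich_lead")
def enrich_lead(lead_id: str) -> str:
    return f"enriched {lead_id}"


@observed("post_update")
def post_update(message: str) -> None:
    raise ConnectionError("channel unavailable")


enrich_lead("L-1")
try:
    post_update("weekly summary")
except ConnectionError:
    pass  # the failure is already in the audit trail and the error log
```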

Governance also means deciding what the AI is and is not allowed to do. Permission boundaries prevent the system from acting outside its scope, and escalation paths route edge cases to humans instead of improvising answers. These guardrails exist in the system architecture. The model does not impose them on itself.
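Permission boundaries and escalation paths can be expressed as a policy the system checks before any action executes, independent of what the model proposes. In the sketch below, the `Policy` structure, the action names, and the refund limit are all hypothetical: out-of-scope actions are denied, in-scope actions above a limit are escalated to a human, and everything else is allowed.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class Policy:
    """What one agent may do (hypothetical structure, not a specific framework)."""
    allowed_actions: Set[str]
    # Per-action limits; requests above the limit go to a human for approval.
    approval_limits: Dict[str, float] = field(default_factory=dict)


def authorize(policy: Policy, action: str, amount: float = 0.0) -> str:
    """Return 'allow', 'escalate', or 'deny'. Enforced by the system, outside the model."""
    if action not in policy.allowed_actions:
        return "deny"  # outside the agent's scope entirely
    limit = policy.approval_limits.get(action)
    if limit is not None and amount > limit:
        return "escalate"  # in scope, but an edge case a human should decide
    return "allow"


support_agent = Policy(
    allowed_actions={"send_reply", "issue_refund"},
    approval_limits={"issue_refund": 100.0},
)
print(authorize(support_agent, "send_reply"))           # allow
print(authorize(support_agent, "issue_refund", 250.0))  # escalate
print(authorize(support_agent, "delete_account"))       # deny
```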

The Model Swap Test

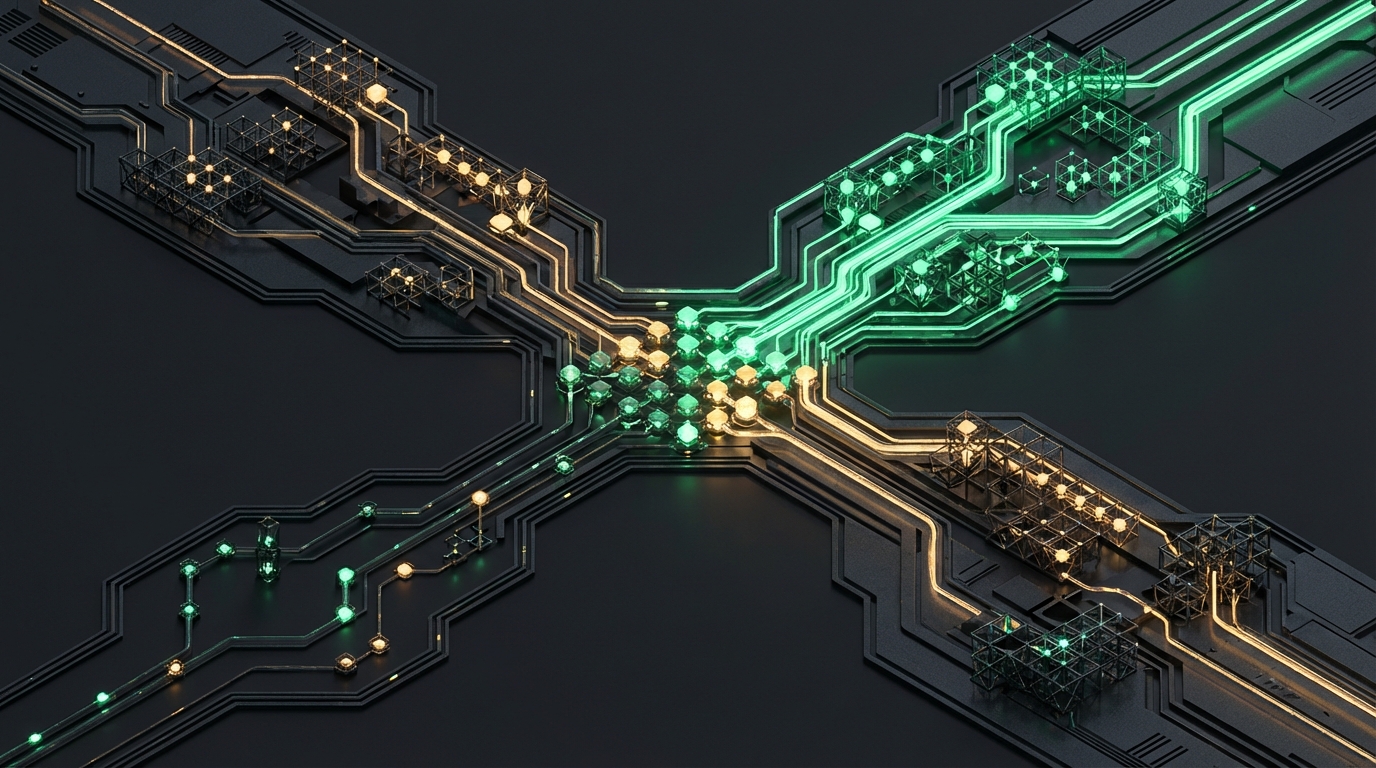

We run a multi-agent production system at Fountain City where different models handle different types of work. Over the past few years, we have tested models from Anthropic (Opus, Sonnet, Haiku), Z.ai (a Chinese AI provider; GLM-4.7, GLM-5, GLM-5-Turbo), Google (Gemini 3.1 Pro, Gemini 3 Flash, Gemini 2.5 Flash), xAI (Grok), and OpenAI.

The results reveal something important about where value actually lives in an AI system.

For operational workflow tasks, the kind of structured step-by-step work that forms the backbone of most business processes, swapping between Anthropic and Z.ai models produces no meaningful difference in output quality. The orchestration layer handles the transition cleanly because the system design, not the model, determines the result. The cost profile changes. The outcome does not.

For work requiring deep analysis, complex instruction-following, and nuanced writing, model choice matters more. We found Opus outperforms other options for tasks requiring both power and precision. But even here, the system architecture determines whether that capability translates into business value. An excellent model with poor orchestration still produces inconsistent results. A good model with excellent orchestration produces reliable ones.

Cost optimization through smaller models backfired in practice. We initially used cheaper models for simple validation tasks, and the lower per-call cost seemed like smart economics. But the cheaper models produced more false positives and false negatives, which broke downstream operations and created more work than they saved. We removed all low-cost models from the pipeline as a policy decision. Model selection matters for specific capability requirements, but it is a tactical optimization made after the system design is solid.

We also learned that not all models are interchangeable in practice, despite similar benchmark scores. Google’s Gemini models, as of this writing, excel at domain knowledge tasks like software architecture analysis and scientific modeling. They struggle with the tight workflow execution and reliable tool use that agent systems require. OpenAI’s strongest current model for agentic work, GPT-5.4 Codex (as of this writing), is excellent for coding but is not what we reach for when building operational agent workflows. Each provider has real strengths. The system design determines whether those strengths reach the business process where they matter.

Cross-provider fallback policies handle outages automatically. If one provider is unavailable, the system routes to an equivalent model from another provider, or pauses and waits if the task requires a specific capability level. This resilience exists in the architecture. No single model makes the system fragile or robust. The design does.
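A cross-provider fallback policy of this kind might be sketched as follows. The catalog entries, provider names, and numeric capability tiers are placeholders, not real model IDs or our production configuration. Each task declares a minimum tier; the router picks the lowest qualifying tier from any provider that is up, and returns `None` (pause and retry later) rather than dropping below the floor. A floor like `min_tier` is also one way to encode a rule such as keeping low-cost models out of a pipeline.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class ModelOption:
    provider: str
    name: str
    tier: int  # hypothetical capability tier: higher means more capable


# Placeholder catalog in preference order; these are not real model IDs.
CATALOG: List[ModelOption] = [
    ModelOption("provider_a", "a-large", tier=3),
    ModelOption("provider_b", "b-large", tier=3),
    ModelOption("provider_a", "a-medium", tier=2),
    ModelOption("provider_b", "b-medium", tier=2),
]


def pick_model(min_tier: int, provider_up: Dict[str, bool]) -> Optional[ModelOption]:
    """Pick the lowest-tier available model that meets the task's floor.

    Returns None when nothing qualifying is up, which tells the caller to
    pause and retry later instead of downgrading below the required tier.
    """
    qualifying = [m for m in CATALOG if m.tier >= min_tier]
    # sorted() is stable, so catalog preference order is kept within a tier.
    for option in sorted(qualifying, key=lambda m: m.tier):
        if provider_up.get(option.provider, False):
            return option
    return None


print(pick_model(3, {"provider_a": False, "provider_b": True}))   # falls back to b-large
print(pick_model(3, {"provider_a": False, "provider_b": False}))  # None: pause the task
```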

What to Ask Before You Choose a Model

If you are evaluating an AI implementation, whether building internally or hiring a partner, these questions will tell you more about the likely outcome than any model benchmark.

- What happens when the model gets updated or deprecated? If the answer involves rebuilding, the system design is too tightly coupled to a specific model. Well-designed systems treat the model as a swappable component.

- How does the system handle errors or hallucinations? Every model hallucinates. The question is whether the system catches it before the output reaches someone who acts on it. If the answer is “the model is very accurate,” the system does not have quality gates.

- Can you swap the underlying model without rebuilding? This is the clearest test of system design quality. If the model is deeply entangled with the business logic, every model update becomes a reengineering project.

- How does data flow in and out of the system? Vague answers here predict data readiness failures. You want to hear specifics: what sources, what formats, how often, what validation happens at ingestion.

- Who monitors the system and how do you know it is working? Production AI systems need observability. Dashboards, alerts, logs, and regular reviews. If nobody is watching, failures accumulate silently.

- What is the human escalation path when the AI gets stuck? Autonomous does not mean unsupervised. Every system needs clear boundaries for when human judgment takes over. If the answer is “the AI handles everything,” the system has not been tested in production.

- How does the system improve over time without manual intervention? Feedback loops, learning from corrections, and self-monitoring separate production systems from one-time implementations. A system that cannot improve is a system you will eventually replace.
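The “can you swap the model without rebuilding?” question comes down to where the model boundary sits in the code. A minimal sketch, assuming a hypothetical `ChatModel` interface and stand-in adapters (real adapters would wrap each provider’s own SDK): business logic depends only on the interface, so changing providers means changing which adapter is configured, not rewriting the workflow.

```python
from typing import Dict, Protocol


class ChatModel(Protocol):
    """The only model surface business logic may depend on (hypothetical)."""

    def complete(self, prompt: str) -> str: ...


# Hypothetical adapters; real ones would wrap each provider's own SDK.
class ProviderAAdapter:
    def complete(self, prompt: str) -> str:
        return f"[A] {prompt}"


class ProviderBAdapter:
    def complete(self, prompt: str) -> str:
        return f"[B] {prompt}"


def summarize_ticket(model: ChatModel, ticket: str) -> str:
    # Business logic builds the prompt and post-processes the result,
    # but never references a vendor, SDK, or model name.
    return model.complete(f"Summarize this support ticket: {ticket}").strip()


MODELS: Dict[str, ChatModel] = {"a": ProviderAAdapter(), "b": ProviderBAdapter()}
ACTIVE = "a"  # swapping providers is a configuration change, not a rebuild

print(summarize_ticket(MODELS[ACTIVE], "Login fails after password reset"))
```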

Notice that none of these questions ask which model the system uses. That is the point. The answers to these seven questions predict implementation success far more reliably than any model comparison.

When Model Choice Does Matter

This is not an argument that model selection is irrelevant. It matters in three specific contexts.

Cost optimization. Different models have different price points, and for high-volume operations, the cost difference between a premium model and a mid-tier one is significant. But this is a budgeting decision made after the system is designed, not a strategic decision that drives the architecture.

Specific capability requirements. Some tasks genuinely require frontier-level models. Complex analysis with large context windows, nuanced writing that must match a specific voice, multi-step reasoning across ambiguous inputs. For these, the top-tier model is worth the cost. For the 80% of tasks that are structured and procedural, it is not.

Regulatory and data residency constraints. Some organizations need their data processed by specific providers, in specific regions, under specific compliance frameworks. This is a legitimate model-selection driver that has nothing to do with capability and everything to do with governance.

In each case, model choice is a tactical decision made within the constraints of a system design. It is not the strategic decision that determines whether the implementation succeeds.

Starting With the Right Question

Shifting the question from “which AI model?” to “which system design?” reframes the evaluation from vendor comparison to architecture planning, and moves the conversation from benchmarks to business outcomes.

If you are at the beginning of an AI implementation, start with the five system decisions described above. Get the orchestration, data pipelines, quality gates, integration, and observability right. Then choose a model that fits the requirements those decisions create.

For vendor evaluations, use the seven questions above as your diagnostic. The answers will tell you whether a partner thinks in systems or in models. Partners who think in systems can adapt when the technology changes. Partners who think in models will be back asking for a rebuild every time a new release drops.

Existing implementations that are not working deserve a system audit before a model swap. The 95% failure rate is a design problem, and design problems have design solutions.

We have been building autonomous AI systems long enough to know that the model is the part that improves on its own. Every six months, a new release makes the model layer better, faster, and cheaper. The system design is the part that requires deliberate engineering. It does not improve by itself. And it is the part that determines whether the model’s capabilities translate into business results.

Fountain City offers a 100% money-back guarantee on initial agent builds. That guarantee is on the system, not the model. We guarantee that the architecture, orchestration, quality gates, and integration will produce the outcomes described in the scope. We do not guarantee that a specific model will be the one running six months from now, because it probably will not be. And that is fine, because the system is designed to absorb that change without disruption.

A well-designed system succeeds across model changes. That is the problem worth engineering for.

Frequently Asked Questions

What is AI system design?

AI system design is the architecture that surrounds a language model: how tasks are decomposed, how data flows in and out, how errors are caught, and how the system connects to existing business processes. It determines whether an AI implementation works reliably in production or fails after the pilot phase.

Why do most AI projects fail?

System-level issues, not model quality, account for the vast majority of AI project failures. MIT’s NANDA initiative found that 95% of generative AI pilots never reach production. The leading causes are data readiness gaps (43% according to CDO Insights via Informatica), poor workflow integration, and missing quality gates and monitoring.

Does it matter which AI model I choose for my business?

Model choice matters for cost optimization, specific capability requirements, and compliance constraints. It matters less than most executives assume for overall implementation success. A well-designed system produces reliable results across multiple models. A poorly designed system underperforms regardless of which model powers it.

What is the difference between AI system design and model selection?

Model selection is choosing a specific language model (GPT-5, Claude, Gemini). System design is everything else: orchestration architecture, data pipelines, quality gates, workflow integration, monitoring, and governance. System design determines whether the model’s capabilities reach the business. Model selection determines the ceiling of what is technically possible within that system.

How do I evaluate an AI vendor’s system design quality?

Ask what happens when the model changes, how errors are caught, whether the model can be swapped without rebuilding, how data flows through the system, and who monitors it. Vendors focused on system design will have specific, detailed answers. Vendors focused on model capabilities will pivot to benchmarks and demo results.

Can I switch AI models without rebuilding my system?

Yes, if the system is well-designed. A properly architected AI system treats the model as a swappable component. We regularly move workflows between models from different providers with no change in outcome quality for structured operational tasks. If swapping models requires significant rework, the system has a design problem.